The Operations Platform Purpose-Built for AI/HPC Data Centers

Liquid cooling, accelerated AI/HPC buildouts, and rising rack power densities demand more than legacy CMMS or DCIM. MCIM unifies asset intelligence, guided execution, and real-time coordination so operators can maintain uptime under the most demanding conditions.

AI/HPC Workloads Are Redefining Operational Rules

As thermal loads rise and liquid cooling becomes standard, small inefficiencies become expensive, and tribal knowledge becomes a reliability risk. Operators are being asked to run environments their tools were never designed for.

Escalating thermal loads

GPU clusters push 50–100+ kW per rack, requiring tighter monitoring and faster response.

Fragmented systems

Jira, spreadsheets, and site-specific tools create blind spots when seconds matter.

Rapid scaling pressure

Adding 20 MW every few weeks leaves no room for manual processes or tribal knowledge.

No standardized execution

Non-standard MOPs/SOPs create silent degradation and compliance risk.

Power Density Progression

At 100+ kW, air cooling hits physics limits. Liquid cooling introduces new operational risk and new requirements.

Liquid Cooling Solves Heat. It Also Introduces New Failure Modes.

Direct-to-chip cooling, coolant chemistry, leak detection, and vendor variation create operational complexity that legacy CMMS platforms were never designed to handle.

Why MCIM

Make Every Center Stronger.

One operational system

One operational system for assets, procedures, incidents, and planning. No more stitching together Jira, spreadsheets, and site tools.

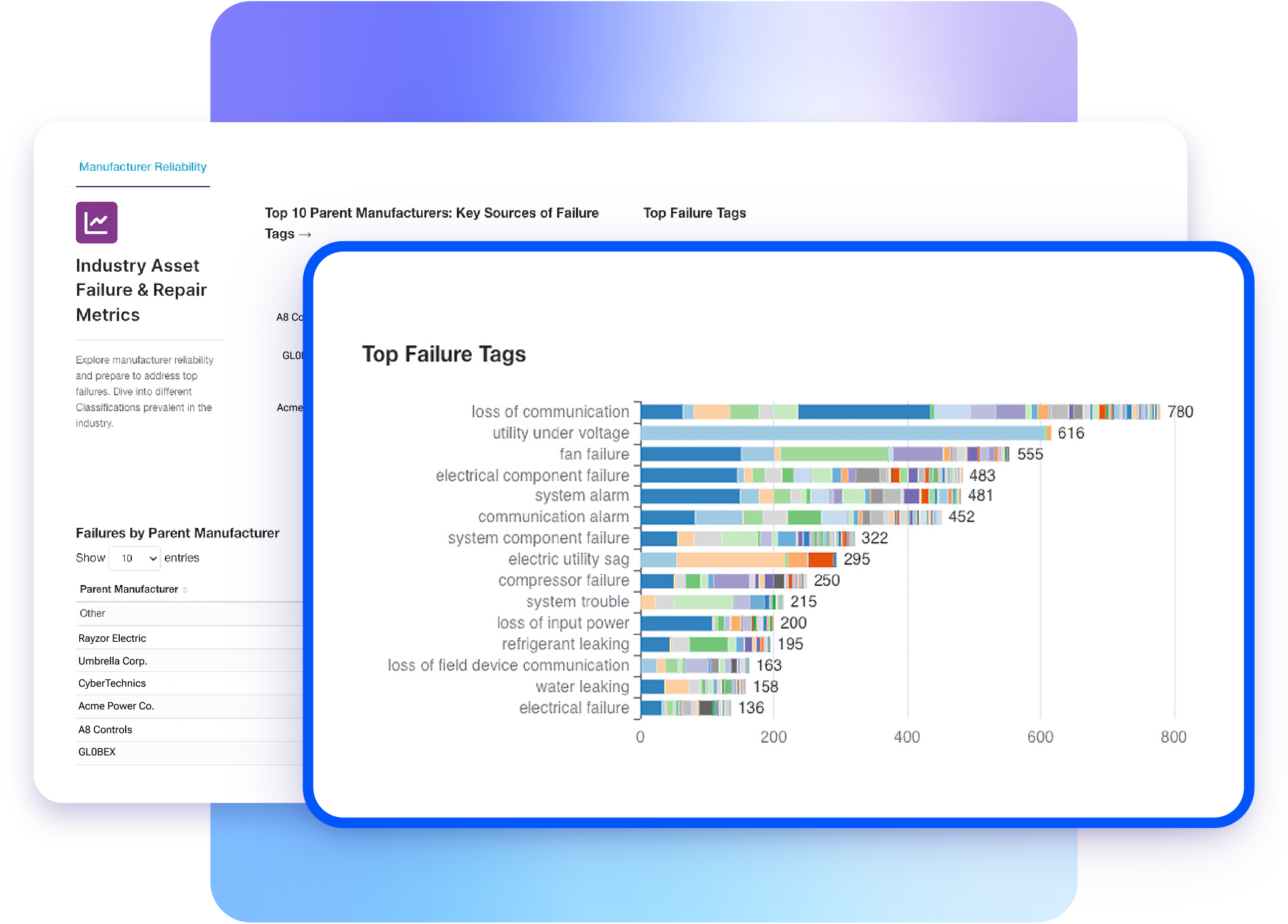

Clean, first-party data

Clean, first-party data with real-time analytics that surface thermal drift, procedural gaps, and early warning signals.

A dedicated partner

A partner dedicated to AI/HPC operations with white-glove implementation and operator-first onboarding.

MCIM makes every center stronger, especially when density and cooling architectures change.

The Operational System Purpose-Built for AI/HPC

Engineered specifically for managing mission-critical data center infrastructure.

Guided Execution

Version-controlled MOPs/SOPs for liquid-cooled, high-voltage environments.

Incident Management

Real-time coordination and chain-of-custody evidence.

Rounds Monitoring

Structured readings to detect thermal drift before it escalates.

Asset Management

Clean asset data as the foundation for reliability at scale.

Capital Planning

Lifecycle-driven planning for retrofits and upgrades.

Executive Reporting

One source of truth replacing fragmented dashboards.

From Crypto to 700 MW of AI/HPC.

A leading infrastructure operator needed to transition from crypto mining to enterprise-grade AI/HPC operations while deploying 20 MW every three weeks. With no central CMMS and data scattered across Jira and spreadsheets, they needed an operational system built for AI/HPC, not a retrofitted tool.

"Out of the box, this looks like it would have every single piece of functionality we would almost want from a system like this. This would end up ultimately replacing all of that and bringing everything into one system."VP of Operations, MCIM Customer

Single pane of glass

Replaced Jira and site-specific tools with one unified platform for exec reporting and team collaboration.

White-glove implementation

MCIM digitized MOPs/SOPs and cleaned asset data, saving weeks of work for the operations team.

Scalable site rollout

Started at 8 MW at a single site, then expanded to four locations with no re-implementation required.

Moving from Crypto to AI/HPC? Operational readiness will fuel your transition.

Power and uptime discipline transfers, but AI/HPC demands tighter procedures, denser deployments, and new cooling workflows. MCIM gets you there faster.

Clean Asset Baseline

Standardized asset inventory across all sites, the nucleus of every operational decision.

Digitize Procedures

Version-controlled digital MOPs/SOPs ready for liquid-cooled environments.

Standardize Rounds

Structured monitoring optimized for high-density rack monitoring and thermal drift detection.

Plan Upgrades

Use lifecycle data and capital planning to sequence retrofits without sacrificing uptime.

Make AI/HPC Operations Repeatable, Auditable, and Scalable.

Unify asset intelligence, standardize execution, and reduce thermal and maintenance risk as you scale density.