Data centers have unprecedented visibility and, sometimes, unprecedented noise. MCIM CEO Mike Parks outlines the five stages of operational maturity we’ve defined and how governance turns signals into actionable intelligence.

The industry often talks about data center operators drowning in telemetry, but the reality is different. Most organizations are drowning in fragmentation.

Over the past two decades, portfolios expanded through construction, acquisitions, and new generations of infrastructure technology. Each phase introduced new building management systems, EPMS platforms, OEM tooling, and alarm architectures, and with them came:

- Different vendors

- Different alarm languages

- Different thresholds

- Different operational workflows

What organizations gained in visibility, they often lost in operational coherence. More signals don’t automatically create more intelligence.

Across portfolios, this shows up in how signals are handled day to day. In some environments, alarms are interpreted manually and require constant human intervention. In others, signals are structured, correlated, and tied directly to execution. In the most advanced environments, data informs decisions before issues fully develop. At scale, performance is shaped by operational maturity.

Operational Maturity Matters

From our work across global data center portfolios, we’ve defined five distinct stages of operational maturity that consistently show up in practice:

Level 1: Reactive/Fragmented

Site-based alarms, OEM tools, and manual triage dominate. Signals are noisy, alarms rarely map cleanly to work execution, and maintenance activity and spend are split roughly evenly between planned and reactive work.

Level 2: Centralized Visibility

A global command center or NOC aggregates alarms across sites. Operators gain portfolio visibility, but humans remain the primary triage engine and correlation remains limited.

Level 3: Normalized & Automated Triage

Alarm ingestion is centralized and normalized across systems. Parent-child relationships suppress cascading events, rules automate triage, and known conditions automatically generate work and inform technicians in the field during the flow of work.

Level 4: Condition-Based Operations

Telemetry, alarms, EPMS, and DCIM signals are correlated. Fault detection focuses on the P-F interval (the window between a detectable potential failure and functional failure), planned maintenance dominates operational activity, and alarm conditions are validated against work execution in a closed loop.

Level 5: Optimized & Predictive

Advanced analytics and machine learning operate on clean operational data. Asset performance informs capital planning, reliability is continuously optimized, and digital twin models become operationally useful.

Most organizations believe they operate at Level 3 or 4. In reality, many portfolios are still operating between Levels 1 and 2. That gap is where operational noise, inefficiency, and risk accumulate.

Start With the Outcome

One of my favorite observations about data is “If you interrogate it long enough, it will confess to anything.” In other words, if you don’t define what you’re trying to accomplish before you start collecting signals, the data will eventually tell whatever story you want it to tell.

Across complex infrastructure environments, it’s common to see large volumes of alarms, alerts, and telemetry generated daily. The vast majority of those signals are informational. A very small percentage correlate to events that materially impact performance, reliability, or financial outcomes.

The challenge isn’t simply filtering noise. It’s establishing the governance layer that determines which signals matter in the first place. When outcomes are clearly defined, whether that’s maintenance cost per kW, mean time to failure, SLA adherence, or asset-level performance, telemetry can be structured around those targets, with prioritized signals, enforced workflows, and actions that can be measured against impact. Without that discipline, data tends to accumulate faster than insight.

Without that governance layer, organizations remain stuck in the early stages of operational maturity. Signals accumulate, but decisions do not improve. Operational intelligence only emerges once telemetry is structured around outcomes and tied directly to governed workflows.

Visibility Isn’t the Same as Context

Most large portfolios grew through expansion and acquisition. Over time, that creates multiple generations of building management systems, EPMS platforms, OEM tooling, and alarm architectures. Each system was configured by a different team at a different time, solving for a local need, not a unified portfolio model.

On paper, you’ve got visibility. In practice, it means operators are asking human beings to interpret disparate alarm strings from multiple systems across hundreds of facilities and make the right decision in real time. One site might classify a temperature deviation as critical, another might flag it as informational, and a third might suppress it entirely. The same condition ends up handled inconsistently across the portfolio.

The human brain isn’t built to correlate thousands of disjointed signals under pressure. Without contextualization and governance, more telemetry just increases cognitive load and slows the moment that actually matters: the decision.
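To make that inconsistency concrete, here is a minimal Python sketch of what normalizing site-level alarms into one portfolio-wide model might look like. Everything named here is hypothetical and invented for illustration: the alarm strings, the `CONDITION_ALIASES` and `PORTFOLIO_SEVERITY` tables, and the three-step severity scale. It does not describe MCIM's product or any vendor's actual alarm schema.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional, Tuple


class Severity(IntEnum):
    """Hypothetical portfolio-wide severity scale."""
    INFO = 1
    WARNING = 2
    CRITICAL = 3


@dataclass(frozen=True)
class RawAlarm:
    site: str
    text: str            # alarm string as emitted by the local system
    local_severity: str  # label chosen by the local configuration


# Hypothetical alias table: local alarm strings -> one canonical condition.
CONDITION_ALIASES = {
    "HIGH TEMP ALM": "temp_deviation",
    "Supply air temp out of range": "temp_deviation",
}

# Hypothetical governed policy: severity is set per condition, not per site.
PORTFOLIO_SEVERITY = {
    "temp_deviation": Severity.CRITICAL,
}


def normalize(alarm: RawAlarm) -> Tuple[Optional[str], Severity]:
    """Return (canonical condition, governed severity) for a raw alarm.

    Unrecognized alarm strings come back as (None, WARNING) so they are
    routed to a person for review instead of being silently dropped.
    """
    condition = CONDITION_ALIASES.get(alarm.text)
    if condition is None:
        return None, Severity.WARNING
    return condition, PORTFOLIO_SEVERITY.get(condition, Severity.WARNING)


alarms = [
    RawAlarm("site-a", "HIGH TEMP ALM", "critical"),
    RawAlarm("site-b", "Supply air temp out of range", "informational"),
]
results = [normalize(a) for a in alarms]
```

In this sketch the two sites disagree on the local label, but both alarms resolve to the same condition and the same governed severity. The local label is kept on the record for audit, yet it no longer drives the response.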

Alarm Fatigue Is a Governance Problem

When teams are required to touch nearly every alarm, they stop trusting the system. Panels get bypassed. Thresholds get ignored. Human backstops get layered on top of broken processes. Global command centers call sites simply to document awareness. Activity gets mistaken for control. Meanwhile, the root cause is often buried under a cascade of secondary alarms generated by systems that don’t understand each other.

Alarm fatigue is rarely a technology problem. It’s almost always a maturity problem.

In Level 1 and Level 2 environments, alarms function primarily as notifications. Humans must interpret them, correlate them, and determine the appropriate action. As signal volume grows, the cognitive burden grows with it.

In Level 3 environments, alarms become structured operational inputs. Known conditions trigger predefined workflows, cascading events are suppressed, and humans intervene only when judgment is required.

The difference between those environments is governance, not telemetry.
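The Level 3 behavior described above (suppress cascading events, trigger predefined workflows for known conditions, leave the rest to human judgment) can be sketched in a few lines of Python. This is an illustration under stated assumptions, not MCIM's implementation: the asset hierarchy in `PARENT`, the rule table `WORKFLOWS`, and every asset, condition, and workflow name are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple


@dataclass(frozen=True)
class Alarm:
    asset: str
    condition: str


# Hypothetical asset hierarchy: child asset -> upstream parent asset.
# Assumed to be acyclic.
PARENT: Dict[str, str] = {
    "pdu-1": "ups-1",
    "rack-12": "pdu-1",
}

# Hypothetical rules: known condition -> predefined workflow.
WORKFLOWS: Dict[str, str] = {
    "ups_on_battery": "dispatch_electrical_tech",
}


def has_alarming_ancestor(asset: str, alarming: Set[str]) -> bool:
    """Walk up the hierarchy; True if any upstream asset is also in alarm."""
    parent = PARENT.get(asset)
    while parent is not None:
        if parent in alarming:
            return True
        parent = PARENT.get(parent)
    return False


def triage(
    alarms: List[Alarm],
) -> Tuple[List[Tuple[Alarm, str]], List[Alarm], List[Alarm]]:
    """Split alarms into (automated work, human review, suppressed)."""
    alarming = {a.asset for a in alarms}
    work, review, suppressed = [], [], []
    for alarm in alarms:
        if has_alarming_ancestor(alarm.asset, alarming):
            suppressed.append(alarm)  # cascading event; the cause is upstream
        elif alarm.condition in WORKFLOWS:
            work.append((alarm, WORKFLOWS[alarm.condition]))  # known condition
        else:
            review.append(alarm)  # no rule matches; judgment required
    return work, review, suppressed


work, review, suppressed = triage([
    Alarm("ups-1", "ups_on_battery"),
    Alarm("pdu-1", "loss_of_input"),
    Alarm("rack-12", "loss_of_power"),
    Alarm("crah-3", "vibration_high"),
])
```

Of the four alarms in this example, one generates work automatically, two are suppressed as downstream effects of the UPS event, and only one reaches a person. The rules and the hierarchy are the governed part; the telemetry is the same either way.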

The Real Strategic Moat

The competitive advantage in modern infrastructure operations is not more sensors or more dashboards. It’s operational governance at scale. Organizations that achieve this operate with three characteristics:

- A single operational system of record across the portfolio

- Governed workflows instead of local workarounds

- Signals converted into structured operational actions

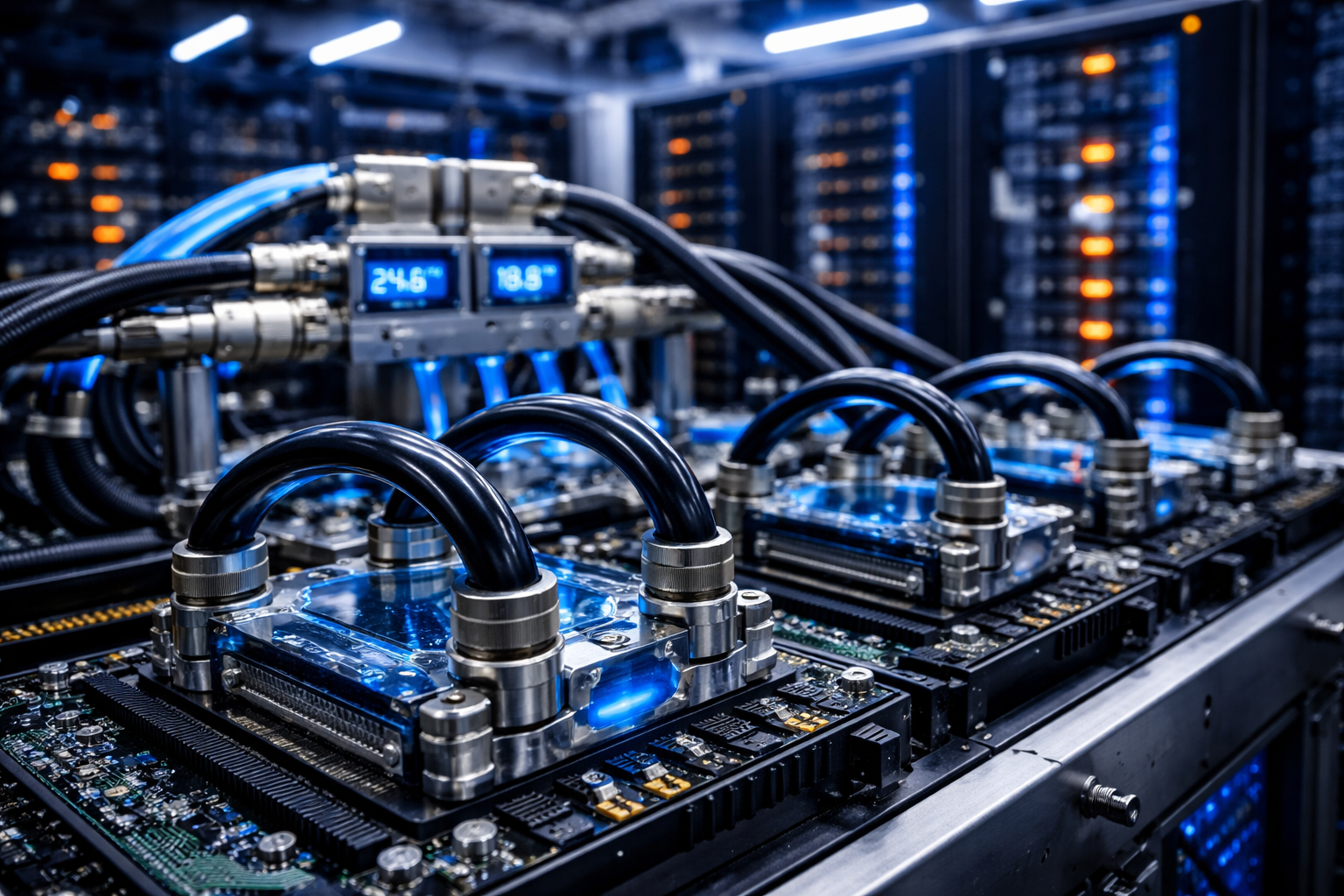

This is where the convergence of IT, OT, and IoT systems becomes critical. Data centers now operate as deeply integrated cyber-physical environments. Mechanical, electrical, and digital systems must operate as one operational model.

Platforms like MCIM exist to make that convergence operationally possible, converting fragmented signals across infrastructure systems into governed execution across the portfolio.

When the system tells a technician to act, it has to be worth their time. Trust in the signal is the foundation of operational discipline.

As AI workloads accelerate infrastructure density and operational complexity, the industry is entering a new phase. Power architectures are changing, cooling systems are evolving, and IT and facility operations are converging faster than ever. In that environment, the organizations that succeed will not be the ones with the most telemetry.

They will be the ones that have built disciplined operating models, progressing deliberately from fragmented operations toward governed, condition-based, and eventually predictive infrastructure management.

More data won’t make data centers stronger. Operational maturity will.