Sustained AI workloads increase thermal and operational complexity. In this article, learn what that means for cooling strategy and OpEx, and how teams can maintain performance across evolving infrastructure.

AI/HPC environments are defined by sustained intensity. GPUs don’t idle between bursts of activity; they’re training, inferring, and computing continuously. That steady-state demand tightens redundancy margins and compresses maintenance windows that once provided operational flexibility.

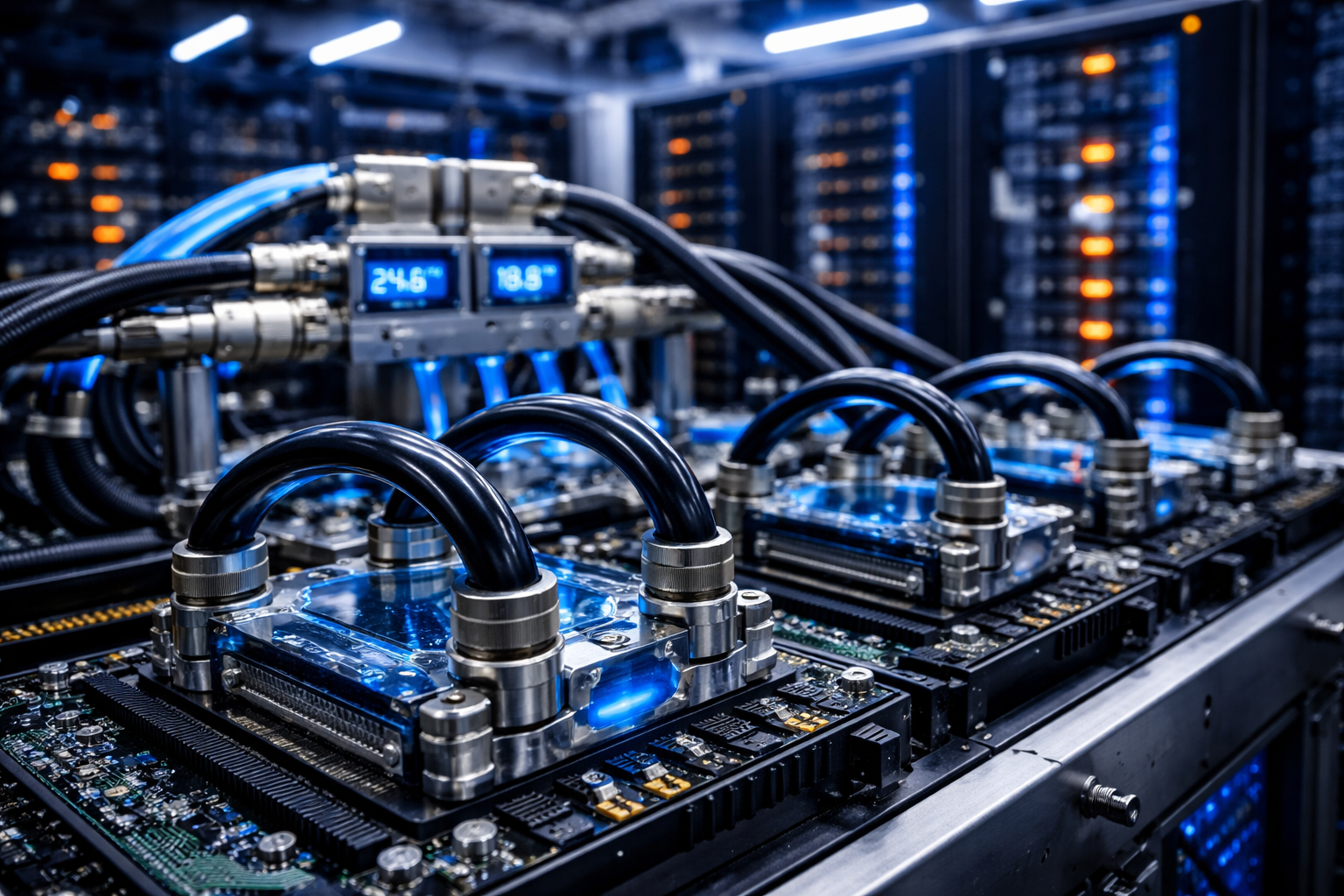

As density rises, so does the thermal load per square foot. Nearly all of that electrical input becomes heat that must be removed continuously and efficiently. Cooling is now tightly integrated with overall performance rather than operating in the background.

Understanding how these pressures compound is essential to building an operational model that can sustain higher density over time.

Density Reshapes Cooling Strategy

At moderate densities, forced-air cooling could manage thermal output because that output stayed within predictable limits. As rack densities have climbed to 100 kW and beyond in many AI/HPC deployments, thermal management requirements have expanded accordingly.
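To put the 100 kW figure in perspective, the sketch below estimates the coolant flow needed to carry that heat away, using the standard relation Q = ṁ · c_p · ΔT. The 10 K coolant temperature rise and the use of plain water are assumptions for illustration, not values from a specific deployment.

```python
# Sketch: coolant mass flow needed to remove a rack's heat load.
# Relation: Q = m_dot * c_p * delta_T  ->  m_dot = Q / (c_p * delta_T)
# Assumptions: water as the coolant and a 10 K supply/return temperature rise.

WATER_CP_J_PER_KG_K = 4186.0  # approximate specific heat of water


def required_flow_kg_per_s(heat_load_w: float, delta_t_k: float,
                           cp: float = WATER_CP_J_PER_KG_K) -> float:
    """Return the mass flow (kg/s) needed to absorb heat_load_w at delta_t_k."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_load_w / (cp * delta_t_k)


if __name__ == "__main__":
    flow = required_flow_kg_per_s(100_000, 10.0)
    # Water is roughly 1 kg per litre, so kg/s * 60 approximates L/min.
    print(f"{flow:.2f} kg/s (about {flow * 60:.0f} L/min of water)")
```

Under these assumptions a single 100 kW rack needs a little under 2.4 kg/s of water, which is why flow interruptions matter so much more at high density.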

Liquid-based cooling strategies are now common across AI/HPC environments, but their resiliency isn’t instantaneous. A typical cooling distribution unit (CDU) failover has a thermal transient window of roughly 3-8 minutes: detection (10-60 s), standby startup (30-120 s), then stabilization (2-5 min). If GPUs are already near high thermal bands before the event, this transient can push workloads into throttling even when redundancy is functioning as designed. In practice, thermal margin before the event often determines whether failover is invisible or costly.
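The failover arithmetic above can be sketched as a simple margin check. The phase ranges come from the text; the throttle threshold, starting temperature, and constant rise rate are hypothetical inputs, and real thermal behavior is unlikely to be linear.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PhaseRange:
    name: str
    min_s: float
    max_s: float


# Phase durations as quoted in the text.
FAILOVER_PHASES = (
    PhaseRange("detection", 10, 60),
    PhaseRange("standby startup", 30, 120),
    PhaseRange("stabilization", 120, 300),
)


def transient_window_s(phases=FAILOVER_PHASES):
    """Return (best_case, worst_case) total transient duration in seconds."""
    return sum(p.min_s for p in phases), sum(p.max_s for p in phases)


def seconds_until_throttle(current_c, throttle_c, rise_c_per_min):
    """Time until the throttle threshold, assuming a constant rise rate."""
    if rise_c_per_min <= 0:
        return float("inf")
    margin_c = max(throttle_c - current_c, 0.0)
    return margin_c / rise_c_per_min * 60.0


def failover_is_invisible(current_c, throttle_c, rise_c_per_min,
                          phases=FAILOVER_PHASES):
    """True if the thermal margin outlasts the worst-case transient."""
    _, worst_case_s = transient_window_s(phases)
    return seconds_until_throttle(current_c, throttle_c, rise_c_per_min) >= worst_case_s


if __name__ == "__main__":
    print(transient_window_s())  # best and worst case, in seconds
    # Hypothetical GPU temperatures: same event, different starting margin.
    print(failover_is_invisible(current_c=70, throttle_c=83, rise_c_per_min=1.0))
    print(failover_is_invisible(current_c=80, throttle_c=83, rise_c_per_min=1.0))
```

The two calls at the end differ only in starting temperature, which mirrors the point that pre-event margin, not the redundancy design itself, often decides the outcome.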

Common cooling approaches include:

- Immersion cooling, which submerges hardware in non-conductive liquid to transfer heat rapidly

- Direct-to-chip cooling, which uses liquid-cooled plates mounted directly to processors

- Rear-door heat exchangers and cooling distribution units, which extend heat rejection deeper into the mechanical chain

Each approach introduces additional operational considerations, with cooling components now representing a larger share of lifecycle management planning. The infrastructure is evolving quickly, and cooling architectures vary across portfolios. As that evolution continues, facilities will likely see operational complexity increase proportionally (think more SKUs in spare parts inventory and more specialized vendor contracts).

Power and Cooling Are Interdependent Systems

In AI/HPC facilities, power density and cooling capacity function as a coupled system: changes in IT load immediately affect thermal demand, cooling efficiency influences power consumption patterns, and maintenance activity within one system can impact performance in another.

These dependencies reduce the margin for error and compress the time between detection and response. Teams need shared visibility across asset condition, work execution history, and performance data in order to make informed decisions.

A recent AI/HPC incident pattern shows why this coupling matters operationally: three UPS static-switch transfer oscillations in 74 seconds didn’t drop IT load, but they degraded CDU pump behavior and reduced flow enough to push GPU inlet temperature from 22.1 °C to 28.4 °C in 11 minutes. The result was sustained throttling and a failed checkpoint cycle. In high-density environments, this is the failure signature to watch: power disturbance, then cooling degradation, then compute continuity risk.
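As a rough illustration, the sketch below computes the inlet temperature rise rate from the figures above and checks an event stream for the three-stage signature. The event kind names, the 15-minute correlation window, and the timestamps in the example are hypothetical; a real monitoring stack would define its own event taxonomy.

```python
def rise_rate_c_per_min(start_c: float, end_c: float, minutes: float) -> float:
    """Average temperature rise rate over the interval."""
    return (end_c - start_c) / minutes


# Hypothetical event kinds, in the order the failure signature unfolds.
SIGNATURE = ("power_disturbance", "cooling_degradation", "compute_throttling")


def matches_signature(events, window_s: float = 900.0) -> bool:
    """Return True if the signature stages occur in order within window_s
    of the power disturbance that started the sequence.

    events: iterable of (timestamp_s, kind) tuples sorted by timestamp.
    """
    stage = 0
    start_ts = None
    for ts, kind in events:
        if start_ts is not None and ts - start_ts > window_s:
            # Correlation window expired; start looking again from stage 0.
            stage, start_ts = 0, None
        if kind == SIGNATURE[stage]:
            if stage == 0:
                start_ts = ts
            stage += 1
            if stage == len(SIGNATURE):
                return True
    return False


if __name__ == "__main__":
    print(f"{rise_rate_c_per_min(22.1, 28.4, 11):.2f} C/min")
    events = [
        (0, "power_disturbance"),
        (30, "power_disturbance"),
        (74, "power_disturbance"),
        (200, "cooling_degradation"),   # illustrative timestamp
        (660, "compute_throttling"),    # illustrative timestamp
    ]
    print(matches_signature(events))
```

Correlating events across power, cooling, and compute in this way is one option for surfacing the pattern early; it presumes all three domains report into a shared timeline.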

When technicians perform rounds, document maintenance, or log incidents tied to cooling or power systems, that information becomes part of a continuous, structured dataset. This reduces fragmentation and strengthens coordination between mechanical, electrical, and IT functions.

The Real Metric Is OpEx per Megawatt

Public conversation around AI/HPC development often emphasizes capital deployment. Securing megawatts and procuring GPUs are highly visible milestones, and capital cost per megawatt is closely tracked.

Operational cost per megawatt, however, ultimately determines long-term performance.

Why? Because it governs the three largest recurring expense categories in AI/HPC environments: utility consumption, specialized labor, and third-party maintenance. As density rises, utility spend scales with sustained load, staffing requirements increase to support liquid cooling systems, and service contracts expand to cover new mechanical components. If those variables aren’t measured and optimized, margins compress regardless of how efficiently the facility was built.

To make OpEx per MW actionable, track four linked indicators: (1) brownout cost per MW-hour, (2) the ratio of preventive maintenance (PM) events to failures, (3) critical alarm acknowledgment latency, and (4) repeat failure rate within 90 days of maintenance. This converts “high operating intensity” into measurable execution quality. A practical baseline is a PM-to-failure ratio above 4:1, repeat failures below 5%, and continuous reduction in critical-alarm response delay.
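Two of these indicators can be computed directly from work-order history. The sketch below is a minimal version under stated assumptions: the `WorkOrder` record and its fields are hypothetical, and "repeat failure" is interpreted here as a failure on the same asset within 90 days after a PM event, which is one reading of the metric among several.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class WorkOrder:
    """Hypothetical work-order record; field names are illustrative."""
    asset_id: str
    kind: str            # "pm" or "failure"
    closed_at: datetime


def pm_to_failure_ratio(orders) -> float:
    pm = sum(1 for o in orders if o.kind == "pm")
    failures = sum(1 for o in orders if o.kind == "failure")
    return float("inf") if failures == 0 else pm / failures


def repeat_failure_rate(orders, window=timedelta(days=90)) -> float:
    """Share of PM events followed by a failure on the same asset within window."""
    pms = [o for o in orders if o.kind == "pm"]
    failures = [o for o in orders if o.kind == "failure"]
    if not pms:
        return 0.0
    repeats = sum(
        1 for pm in pms
        if any(f.asset_id == pm.asset_id
               and timedelta(0) < f.closed_at - pm.closed_at <= window
               for f in failures)
    )
    return repeats / len(pms)


def meets_baseline(ratio: float, repeat_rate: float) -> bool:
    """Baseline from the text: PM-to-failure above 4:1, repeats below 5%."""
    return ratio > 4.0 and repeat_rate < 0.05


if __name__ == "__main__":
    # Made-up sample data: five PMs, one failure shortly after one of them.
    orders = [WorkOrder(a, "pm", datetime(2024, 1, 1)) for a in "ABCDE"]
    orders.append(WorkOrder("A", "failure", datetime(2024, 2, 1)))
    ratio = pm_to_failure_ratio(orders)
    rate = repeat_failure_rate(orders)
    print(ratio, rate, meets_baseline(ratio, rate))
```

In this made-up sample the PM-to-failure ratio clears 4:1, but the repeat failure rate does not clear 5%, so the baseline is not met. Tracking the indicators together is what exposes that kind of gap.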

Operators need granular visibility into how infrastructure performs under sustained density. By standardizing maintenance workflows, capturing structured asset data, and automating reporting, they gain measurable insight into cost and reliability trends across the portfolio.

Standardization Across Diverse Architectures

AI/HPC portfolios frequently include multiple cooling approaches deployed across different facilities or halls. Infrastructure diversity increases the importance of standardized execution.

Standardized execution rests on a few controls:

- Preventive maintenance procedures need version control to ensure technicians follow current protocols.

- Required data fields help ensure complete documentation.

- Escalation paths should trigger automatically when thresholds are crossed.

- Incident classification logic must remain consistent across sites.
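The controls listed above can be sketched as simple checks on a maintenance record. The field names, the version string format, and the temperature threshold are all hypothetical; the point is only that each control is mechanical enough to automate.

```python
# Hypothetical required fields for a cooling-system round; names are illustrative.
REQUIRED_FIELDS = ("asset_id", "technician", "procedure_version", "reading_c")


def missing_fields(record: dict) -> list[str]:
    """Return required fields that are absent or empty in the record."""
    return [f for f in REQUIRED_FIELDS if record.get(f) in (None, "")]


def uses_current_procedure(record: dict, current_version: str) -> bool:
    """True if the record was logged against the current procedure version."""
    return record.get("procedure_version") == current_version


def needs_escalation(record: dict, threshold_c: float) -> bool:
    """True if the logged reading meets or exceeds the escalation threshold."""
    return record["reading_c"] >= threshold_c


if __name__ == "__main__":
    record = {
        "asset_id": "CDU-07",
        "technician": "",
        "procedure_version": "v3",
        "reading_c": 29.0,
    }
    print(missing_fields(record))
    print(uses_current_procedure(record, "v4"))
    print(needs_escalation(record, threshold_c=27.0))
```

Applying the same checks at every site is also what keeps incident classification consistent, since the inputs to classification arrive complete and in the same shape.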

Even as infrastructure varies, execution can remain disciplined and repeatable. Technicians follow configured processes, capture required information, and move tasks forward through automated workflow paths, reducing variability across shifts and locations.

Institutionalizing Operational Learning

AI/HPC infrastructure continues to evolve, and operational learning must evolve with it. Some cooling configurations will prove more efficient over time, while others may introduce higher maintenance frequency or staffing requirements.

Capturing structured performance data allows operators to see how specific cooling configurations impact incident frequency and maintenance demand over time, which directly informs future design and operating decisions.
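One way to make that comparison concrete is to normalize incident counts by exposure, so a configuration deployed on many assets is not penalized for sheer volume. The sketch below assumes records shaped as (cooling configuration, incident count, asset-years); the configuration labels and numbers are placeholders, not measured results.

```python
from collections import defaultdict


def incidents_per_asset_year(records):
    """Aggregate incident rate per cooling configuration.

    records: iterable of (cooling_config, incident_count, asset_years) tuples,
    typically one per site or hall.
    """
    totals = defaultdict(lambda: [0, 0.0])
    for config, incidents, asset_years in records:
        totals[config][0] += incidents
        totals[config][1] += asset_years
    # Skip configurations with no recorded exposure to avoid dividing by zero.
    return {c: n / y for c, (n, y) in totals.items() if y > 0}


if __name__ == "__main__":
    # Placeholder data for illustration only.
    records = [
        ("direct_to_chip", 4, 20.0),
        ("direct_to_chip", 2, 10.0),
        ("immersion", 3, 10.0),
    ]
    for config, rate in incidents_per_asset_year(records).items():
        print(f"{config}: {rate:.2f} incidents per asset-year")
```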

Institutional learning depends on measurable trends across maintenance frequency, asset performance, failure modes, and cost drivers. When that intelligence is aggregated at the portfolio level, insights from one site inform decisions at another, while strengthening both day-to-day execution and long-term planning.

A Modern Playbook for Sustained Density

AI/HPC facilities introduce higher heat loads, tighter electrical dependencies, and more complex mechanical systems into the operational environment. Managing that complexity requires disciplined workflows, measurable oversight of operational cost, and integrated visibility across cooling, power, and performance.

Capital investment establishes capacity, but sustained operational precision will determine whether that capacity performs reliably and economically over time.

As density continues to increase and infrastructure designs continue to evolve, operators who adopt a structured, data-driven operational model will be positioned to maintain reliability at scale. That’s what a modern AI/HPC playbook requires, and that’s how operational precision becomes the defining variable.